Compare commits

2 Commits

manager-v4...docs/docs-

| Author | SHA1 | Date |
|---|---|---|
|  | f367d0b12d |  |
|  | b7f60c8c26 |  |

```diff
@@ -1 +0,0 @@
-PYPI_TOKEN=your-pypi-token
```

`.github/workflows/ci.yml` (vendored, 70 lines changed)

```diff
@@ -1,70 +0,0 @@
-name: CI
-
-on:
-  push:
-    branches: [ main, feat/*, fix/* ]
-  pull_request:
-    branches: [ main ]
-
-jobs:
-  validate-openapi:
-    name: Validate OpenAPI Specification
-    runs-on: ubuntu-latest
-    steps:
-      - uses: actions/checkout@v4
-
-      - name: Check if OpenAPI changed
-        id: openapi-changed
-        uses: tj-actions/changed-files@v44
-        with:
-          files: openapi.yaml
-
-      - name: Setup Node.js
-        if: steps.openapi-changed.outputs.any_changed == 'true'
-        uses: actions/setup-node@v4
-        with:
-          node-version: '18'
-
-      - name: Install Redoc CLI
-        if: steps.openapi-changed.outputs.any_changed == 'true'
-        run: |
-          npm install -g @redocly/cli
-
-      - name: Validate OpenAPI specification
-        if: steps.openapi-changed.outputs.any_changed == 'true'
-        run: |
-          redocly lint openapi.yaml
-
-  code-quality:
-    name: Code Quality Checks
-    runs-on: ubuntu-latest
-    steps:
-      - uses: actions/checkout@v4
-        with:
-          fetch-depth: 0 # Fetch all history for proper diff
-
-      - name: Get changed Python files
-        id: changed-py-files
-        uses: tj-actions/changed-files@v44
-        with:
-          files: |
-            **/*.py
-          files_ignore: |
-            comfyui_manager/legacy/**
-
-      - name: Setup Python
-        if: steps.changed-py-files.outputs.any_changed == 'true'
-        uses: actions/setup-python@v5
-        with:
-          python-version: '3.9'
-
-      - name: Install dependencies
-        if: steps.changed-py-files.outputs.any_changed == 'true'
-        run: |
-          pip install ruff
-
-      - name: Run ruff linting on changed files
-        if: steps.changed-py-files.outputs.any_changed == 'true'
-        run: |
-          echo "Changed files: ${{ steps.changed-py-files.outputs.all_changed_files }}"
-          echo "${{ steps.changed-py-files.outputs.all_changed_files }}" | xargs -r ruff check
```
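The removed `code-quality` job lints only the Python files that changed and skips anything under `comfyui_manager/legacy/`. Because of `xargs -r`, `ruff check` does not run at all when that list is empty. The sketch below approximates this selection in Python. It makes three assumptions:

* The changed-file list arrives as whitespace-separated paths, which is how the step echoes `all_changed_files`.
* A `.py` suffix check plus a prefix check stand in for the `tj-actions/changed-files` glob matching.
* `select_python_files` and `ruff_command` are hypothetical helpers, not part of the workflow.

```python
from __future__ import annotations  # allows `list[str] | None` on the workflow's Python 3.9

import shlex

# Approximates files_ignore: comfyui_manager/legacy/**
LEGACY_PREFIX = "comfyui_manager/legacy/"


def select_python_files(all_changed_files: str) -> list[str]:
    """Keep changed *.py paths that are outside the legacy package."""
    return [
        path
        for path in all_changed_files.split()
        if path.endswith(".py") and not path.startswith(LEGACY_PREFIX)
    ]


def ruff_command(all_changed_files: str) -> list[str] | None:
    """Build the ruff invocation, or return None when nothing qualifies (like `xargs -r`)."""
    files = select_python_files(all_changed_files)
    if not files:
        return None
    return ["ruff", "check", *files]


if __name__ == "__main__":
    cmd = ruff_command("README.md comfyui_manager/example.py comfyui_manager/legacy/old.py")
    print(shlex.join(cmd) if cmd else "nothing to lint")
```

Returning `None` instead of an empty command keeps the "skip entirely" case explicit, which is the behavior `-r` gives the shell pipeline.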

`.github/workflows/publish-to-pypi.yml` (vendored, 58 lines changed)

```diff
@@ -1,58 +0,0 @@
-name: Publish to PyPI
-
-on:
-  workflow_dispatch:
-  push:
-    branches:
-      - manager-v4
-    paths:
-      - "pyproject.toml"
-
-jobs:
-  build-and-publish:
-    runs-on: ubuntu-latest
-    if: ${{ github.repository_owner == 'ltdrdata' || github.repository_owner == 'Comfy-Org' }}
-    steps:
-      - name: Checkout code
-        uses: actions/checkout@v4
-        with:
-          fetch-depth: 0
-
-      - name: Set up Python
-        uses: actions/setup-python@v4
-        with:
-          python-version: '3.x'
-
-      - name: Install build dependencies
-        run: |
-          python -m pip install --upgrade pip
-          python -m pip install build twine
-
-      - name: Get current version
-        id: current_version
-        run: |
-          CURRENT_VERSION=$(grep -oP '^version = "\K[^"]+' pyproject.toml)
-          echo "version=$CURRENT_VERSION" >> $GITHUB_OUTPUT
-          echo "Current version: $CURRENT_VERSION"
-
-      - name: Build package
-        run: python -m build
-
-      # - name: Create GitHub Release
-      #   id: create_release
-      #   uses: softprops/action-gh-release@v2
-      #   env:
-      #     GITHUB_TOKEN: ${{ github.token }}
-      #   with:
-      #     files: dist/*
-      #     tag_name: v${{ steps.current_version.outputs.version }}
-      #     draft: false
-      #     prerelease: false
-      #     generate_release_notes: true
-
-      - name: Publish to PyPI
-        uses: pypa/gh-action-pypi-publish@76f52bc884231f62b9a034ebfe128415bbaabdfc
-        with:
-          password: ${{ secrets.PYPI_TOKEN }}
-          skip-existing: true
-          verbose: true
```
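The removed `Get current version` step pulls the version out of `pyproject.toml` with `grep -oP '^version = "\K[^"]+'`. Here `\K` drops the already-matched prefix, so only the value is printed. The sketch below is a rough Python equivalent that uses a multiline regex with a capture group instead of `\K`. Both `read_version` and the sample file contents are made up for illustration.

```python
import re
from typing import Optional

# Mirrors grep -oP '^version = "\K[^"]+': a line that starts with `version = "`,
# capturing the characters up to the next double quote.
VERSION_RE = re.compile(r'^version = "([^"]+)', re.MULTILINE)


def read_version(pyproject_text: str) -> Optional[str]:
    """Return the first matching version value, or None if no line matches."""
    match = VERSION_RE.search(pyproject_text)
    return match.group(1) if match else None


sample = '[project]\nname = "comfyui-manager"\nversion = "4.0.0"\n'
print(read_version(sample))  # 4.0.0
```

One difference from the shell step: `grep -o` prints every matching line, while `search` stops at the first. On Python 3.11+, `tomllib.loads(text)["project"]["version"]` would be a stricter alternative, because it parses the TOML instead of matching lines.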

`.github/workflows/publish.yml` (vendored, Normal file, 25 lines changed)

```diff
@@ -0,0 +1,25 @@
+name: Publish to Comfy registry
+on:
+  workflow_dispatch:
+  push:
+    branches:
+      - main-blocked
+    paths:
+      - "pyproject.toml"
+
+permissions:
+  issues: write
+
+jobs:
+  publish-node:
+    name: Publish Custom Node to registry
+    runs-on: ubuntu-latest
+    if: ${{ github.repository_owner == 'ltdrdata' }}
+    steps:
+      - name: Check out code
+        uses: actions/checkout@v4
+      - name: Publish Custom Node
+        uses: Comfy-Org/publish-node-action@v1
+        with:
+          ## Add your own personal access token to your Github Repository secrets and reference it here.
+          personal_access_token: ${{ secrets.REGISTRY_ACCESS_TOKEN }}
```

`.gitignore` (vendored, 4 lines changed)

```diff
@@ -18,7 +18,3 @@ pip_overrides.json
 *.json
 check2.sh
 /venv/
-build
-dist
-*.egg-info
-.env
```

````diff
@@ -1,47 +0,0 @@
-## Testing Changes
-
-1. Activate the ComfyUI environment.
-
-2. Build package locally after making changes.
-
-```bash
-# from inside the ComfyUI-Manager directory, with the ComfyUI environment activated
-python -m build
-```
-
-3. Install the package locally in the ComfyUI environment.
-
-```bash
-# Uninstall existing package
-pip uninstall comfyui-manager
-
-# Install the locale package
-pip install dist/comfyui-manager-*.whl
-```
-
-4. Start ComfyUI.
-
-```bash
-# after navigating to the ComfyUI directory
-python main.py
-```
-
-## Manually Publish Test Version to PyPi
-
-1. Set the `PYPI_TOKEN` environment variable in env file.
-
-2. If manually publishing, you likely want to use a release candidate version, so set the version in [pyproject.toml](pyproject.toml) to something like `0.0.1rc1`.
-
-3. Build the package.
-
-```bash
-python -m build
-```
-
-4. Upload the package to PyPi.
-
-```bash
-python -m twine upload dist/* --username __token__ --password $PYPI_TOKEN
-```
-
-5. View at https://pypi.org/project/comfyui-manager/
````
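The removed testing guide installs the local build with `pip install dist/comfyui-manager-*.whl`. That glob may not match. The wheel filename convention in the PyPA binary distribution format escapes the project name, turning hyphens into underscores, because `-` separates the filename fields. A freshly built wheel is therefore likely named `comfyui_manager-<version>-...whl`. The sketch below locates the wheel under either spelling. `find_wheel` is a hypothetical helper.

```python
import glob
import os
from typing import Optional


def find_wheel(dist_dir: str = "dist") -> Optional[str]:
    """Return the newest comfyui-manager wheel in dist_dir, accepting '-' or '_' in the name."""
    candidates = glob.glob(os.path.join(dist_dir, "comfyui[-_]manager-*.whl"))
    if not candidates:
        return None
    # If several builds are present, prefer the most recently written file.
    return max(candidates, key=os.path.getmtime)


if __name__ == "__main__":
    wheel = find_wheel()
    print(f"pip install {wheel}" if wheel else "no wheel found; run `python -m build` first")
```

The `[-_]` character class keeps the original hyphenated spelling working too, in case a build backend ever emits it.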

`MANIFEST.in` (14 lines changed)

```diff
@@ -1,14 +0,0 @@
-include comfyui_manager/js/*
-include comfyui_manager/*.json
-include comfyui_manager/glob/*
-include LICENSE.txt
-include README.md
-include requirements.txt
-include pyproject.toml
-include custom-node-list.json
-include extension-node-list.json
-include extras.json
-include github-stats.json
-include model-list.json
-include alter-list.json
-include comfyui_manager/channels.list.template
```

176

README.md

176

README.md

@@ -5,35 +5,86 @@

|

|||||||

|

|

||||||

|

|

||||||

## NOTICE

|

## NOTICE

|

||||||

* V4.0: Modify the structure to be installable via pip instead of using git clone.

|

|

||||||

* V3.16: Support for `uv` has been added. Set `use_uv` in `config.ini`.

|

* V3.16: Support for `uv` has been added. Set `use_uv` in `config.ini`.

|

||||||

* V3.10: `double-click feature` is removed

|

* V3.10: `double-click feature` is removed

|

||||||

* This feature has been moved to https://github.com/ltdrdata/comfyui-connection-helper

|

* This feature has been moved to https://github.com/ltdrdata/comfyui-connection-helper

|

||||||

* V3.3.2: Overhauled. Officially supports [https://registry.comfy.org/](https://registry.comfy.org/).

|

* V3.3.2: Overhauled. Officially supports [https://comfyregistry.org/](https://comfyregistry.org/).

|

||||||

* You can see whole nodes info on [ComfyUI Nodes Info](https://ltdrdata.github.io/) page.

|

* You can see whole nodes info on [ComfyUI Nodes Info](https://ltdrdata.github.io/) page.

|

||||||

|

|

||||||

## Installation

|

## Installation

|

||||||

|

|

||||||

* When installing the latest ComfyUI, it will be automatically installed as a dependency, so manual installation is no longer necessary.

|

### Installation[method1] (General installation method: ComfyUI-Manager only)

|

||||||

|

|

||||||

* Manual installation of the nightly version:

|

To install ComfyUI-Manager in addition to an existing installation of ComfyUI, you can follow the following steps:

|

||||||

* Clone to a temporary directory (**Note:** Do **not** clone into `ComfyUI/custom_nodes`.)

|

|

||||||

```

|

|

||||||

git clone https://github.com/Comfy-Org/ComfyUI-Manager

|

|

||||||

```

|

|

||||||

* Install via pip

|

|

||||||

```

|

|

||||||

cd ComfyUI-Manager

|

|

||||||

pip install .

|

|

||||||

```

|

|

||||||

|

|

||||||

|

1. goto `ComfyUI/custom_nodes` dir in terminal(cmd)

|

||||||

|

2. `git clone https://github.com/ltdrdata/ComfyUI-Manager comfyui-manager`

|

||||||

|

3. Restart ComfyUI

|

||||||

|

|

||||||

|

|

||||||

|

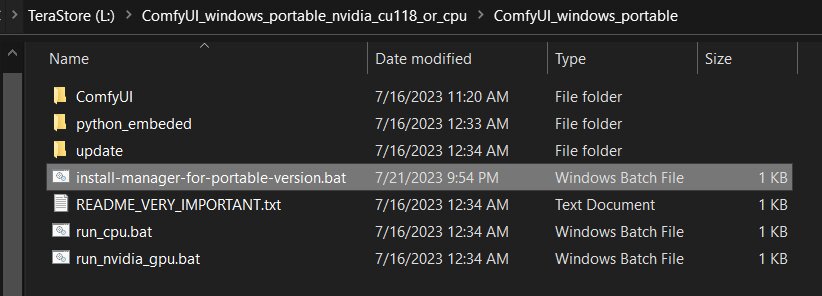

### Installation[method2] (Installation for portable ComfyUI version: ComfyUI-Manager only)

|

||||||

|

1. install git

|

||||||

|

- https://git-scm.com/download/win

|

||||||

|

- standalone version

|

||||||

|

- select option: use windows default console window

|

||||||

|

2. Download [scripts/install-manager-for-portable-version.bat](https://github.com/ltdrdata/ComfyUI-Manager/raw/main/scripts/install-manager-for-portable-version.bat) into installed `"ComfyUI_windows_portable"` directory

|

||||||

|

- Don't click. Right click the link and use save as...

|

||||||

|

3. double click `install-manager-for-portable-version.bat` batch file

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

### Installation[method3] (Installation through comfy-cli: install ComfyUI and ComfyUI-Manager at once.)

|

||||||

|

> RECOMMENDED: comfy-cli provides various features to manage ComfyUI from the CLI.

|

||||||

|

|

||||||

|

* **prerequisite: python 3, git**

|

||||||

|

|

||||||

|

Windows:

|

||||||

|

```commandline

|

||||||

|

python -m venv venv

|

||||||

|

venv\Scripts\activate

|

||||||

|

pip install comfy-cli

|

||||||

|

comfy install

|

||||||

|

```

|

||||||

|

|

||||||

|

Linux/OSX:

|

||||||

|

```commandline

|

||||||

|

python -m venv venv

|

||||||

|

. venv/bin/activate

|

||||||

|

pip install comfy-cli

|

||||||

|

comfy install

|

||||||

|

```

|

||||||

* See also: https://github.com/Comfy-Org/comfy-cli

|

* See also: https://github.com/Comfy-Org/comfy-cli

|

||||||

|

|

||||||

|

|

||||||

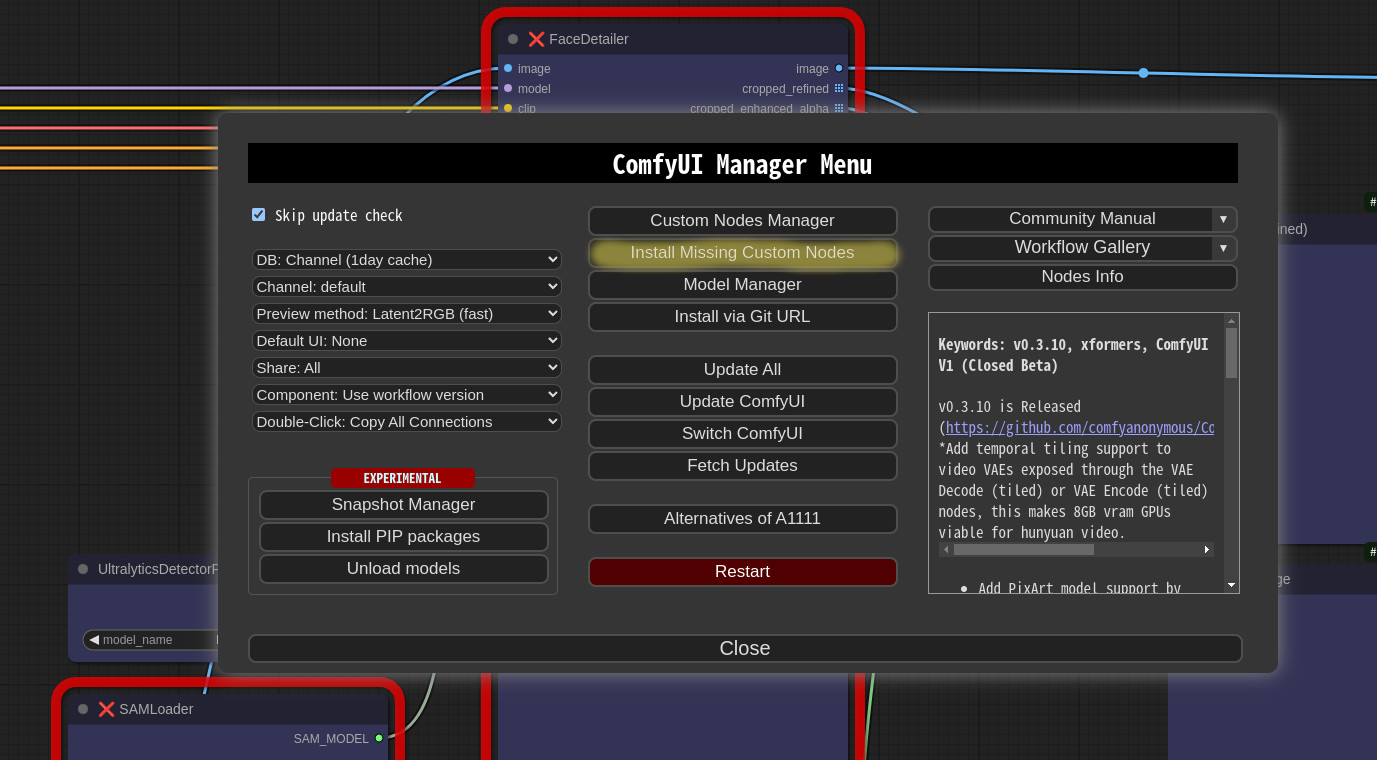

## Front-end

|

### Installation[method4] (Installation for linux+venv: ComfyUI + ComfyUI-Manager)

|

||||||

|

|

||||||

* The built-in front-end of ComfyUI-Manager is the legacy front-end. The front-end for ComfyUI-Manager is now provided via [ComfyUI Frontend](https://github.com/Comfy-Org/ComfyUI_frontend).

|

To install ComfyUI with ComfyUI-Manager on Linux using a venv environment, you can follow these steps:

|

||||||

* To enable the legacy front-end, set the environment variable `ENABLE_LEGACY_COMFYUI_MANAGER_FRONT` to `true` before running.

|

* **prerequisite: python-is-python3, python3-venv, git**

|

||||||

|

|

||||||

|

1. Download [scripts/install-comfyui-venv-linux.sh](https://github.com/ltdrdata/ComfyUI-Manager/raw/main/scripts/install-comfyui-venv-linux.sh) into empty install directory

|

||||||

|

- Don't click. Right click the link and use save as...

|

||||||

|

- ComfyUI will be installed in the subdirectory of the specified directory, and the directory will contain the generated executable script.

|

||||||

|

2. `chmod +x install-comfyui-venv-linux.sh`

|

||||||

|

3. `./install-comfyui-venv-linux.sh`

|

||||||

|

|

||||||

|

### Installation Precautions

|

||||||

|

* **DO**: `ComfyUI-Manager` files must be accurately located in the path `ComfyUI/custom_nodes/comfyui-manager`

|

||||||

|

* Installing in a compressed file format is not recommended.

|

||||||

|

* **DON'T**: Decompress directly into the `ComfyUI/custom_nodes` location, resulting in the Manager contents like `__init__.py` being placed directly in that directory.

|

||||||

|

* You have to remove all ComfyUI-Manager files from `ComfyUI/custom_nodes`

|

||||||

|

* **DON'T**: In a form where decompression occurs in a path such as `ComfyUI/custom_nodes/ComfyUI-Manager/ComfyUI-Manager`.

|

||||||

|

* **DON'T**: In a form where decompression occurs in a path such as `ComfyUI/custom_nodes/ComfyUI-Manager-main`.

|

||||||

|

* In such cases, `ComfyUI-Manager` may operate, but it won't be recognized within `ComfyUI-Manager`, and updates cannot be performed. It also poses the risk of duplicate installations. Remove it and install properly via `git clone` method.

|

||||||

|

|

||||||

|

|

||||||

|

You can execute ComfyUI by running either `./run_gpu.sh` or `./run_cpu.sh` depending on your system configuration.

|

||||||

|

|

||||||

|

## Colab Notebook

|

||||||

|

This repository provides Colab notebooks that allow you to install and use ComfyUI, including ComfyUI-Manager. To use ComfyUI, [click on this link](https://colab.research.google.com/github/ltdrdata/ComfyUI-Manager/blob/main/notebooks/comfyui_colab_with_manager.ipynb).

|

||||||

|

* Support for installing ComfyUI

|

||||||

|

* Support for basic installation of ComfyUI-Manager

|

||||||

|

* Support for automatically installing dependencies of custom nodes upon restarting Colab notebooks.

|

||||||

|

|

||||||

|

|

||||||

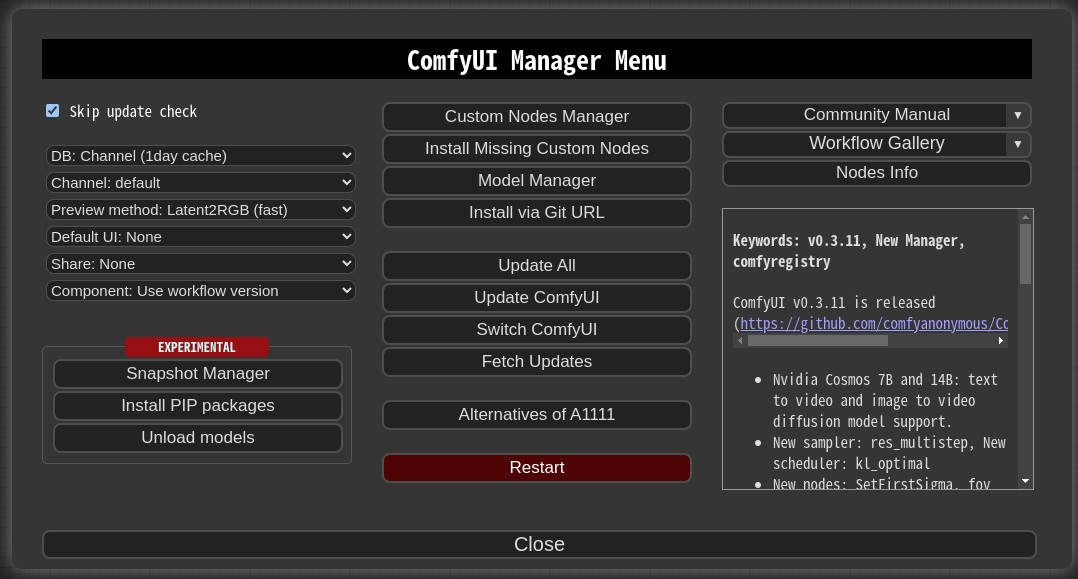

## How To Use

|

## How To Use

|

||||||

````diff
@@ -89,20 +140,20 @@
 
 
 ## Paths
-In `ComfyUI-Manager` V4.0.3b4 and later, configuration files and dynamically generated files are located under `<USER_DIRECTORY>/__manager/`.
+In `ComfyUI-Manager` V3.0 and later, configuration files and dynamically generated files are located under `<USER_DIRECTORY>/default/ComfyUI-Manager/`.
 
 * <USER_DIRECTORY>
 * If executed without any options, the path defaults to ComfyUI/user.
 * It can be set using --user-directory <USER_DIRECTORY>.
 
-* Basic config files: `<USER_DIRECTORY>/__manager/config.ini`
-* Configurable channel lists: `<USER_DIRECTORY>/__manager/channels.ini`
-* Configurable pip overrides: `<USER_DIRECTORY>/__manager/pip_overrides.json`
-* Configurable pip blacklist: `<USER_DIRECTORY>/__manager/pip_blacklist.list`
-* Configurable pip auto fix: `<USER_DIRECTORY>/__manager/pip_auto_fix.list`
-* Saved snapshot files: `<USER_DIRECTORY>/__manager/snapshots`
-* Startup script files: `<USER_DIRECTORY>/__manager/startup-scripts`
-* Component files: `<USER_DIRECTORY>/__manager/components`
+* Basic config files: `<USER_DIRECTORY>/default/ComfyUI-Manager/config.ini`
+* Configurable channel lists: `<USER_DIRECTORY>/default/ComfyUI-Manager/channels.ini`
+* Configurable pip overrides: `<USER_DIRECTORY>/default/ComfyUI-Manager/pip_overrides.json`
+* Configurable pip blacklist: `<USER_DIRECTORY>/default/ComfyUI-Manager/pip_blacklist.list`
+* Configurable pip auto fix: `<USER_DIRECTORY>/default/ComfyUI-Manager/pip_auto_fix.list`
+* Saved snapshot files: `<USER_DIRECTORY>/default/ComfyUI-Manager/snapshots`
+* Startup script files: `<USER_DIRECTORY>/default/ComfyUI-Manager/startup-scripts`
+* Component files: `<USER_DIRECTORY>/default/ComfyUI-Manager/components`
 
 
 ## `extra_model_paths.yaml` Configuration
````
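The `## Paths` hunk moves the manager's data directory between two layouts:

* `<USER_DIRECTORY>/__manager/`, described on the removed side as V4.0.3b4 and later.
* `<USER_DIRECTORY>/default/ComfyUI-Manager/`, described on the added side as V3.0 and later.

In both cases `<USER_DIRECTORY>` defaults to `ComfyUI/user`. The sketch below resolves each listed file for either layout. `manager_paths` and its `layout` switch are hypothetical, not a ComfyUI-Manager API. `PurePosixPath` is used only so the printed paths are stable across platforms.

```python
from pathlib import PurePosixPath

# File and directory names taken from the Paths list in the hunk.
MANAGER_FILES = {
    "config": "config.ini",
    "channels": "channels.ini",
    "pip_overrides": "pip_overrides.json",
    "pip_blacklist": "pip_blacklist.list",
    "pip_auto_fix": "pip_auto_fix.list",
    "snapshots": "snapshots",
    "startup_scripts": "startup-scripts",
    "components": "components",
}


def manager_paths(user_directory: str = "ComfyUI/user", layout: str = "v4") -> dict:
    """Map each entry to its location under the 'v4' (__manager) or 'v3' layout."""
    if layout == "v4":
        base = PurePosixPath(user_directory) / "__manager"
    elif layout == "v3":
        base = PurePosixPath(user_directory) / "default" / "ComfyUI-Manager"
    else:
        raise ValueError(f"unknown layout: {layout!r}")
    return {key: str(base / name) for key, name in MANAGER_FILES.items()}


print(manager_paths()["config"])                # ComfyUI/user/__manager/config.ini
print(manager_paths(layout="v3")["snapshots"])  # ComfyUI/user/default/ComfyUI-Manager/snapshots
```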

@@ -115,17 +166,17 @@ The following settings are applied based on the section marked as `is_default`.

|

|||||||

|

|

||||||

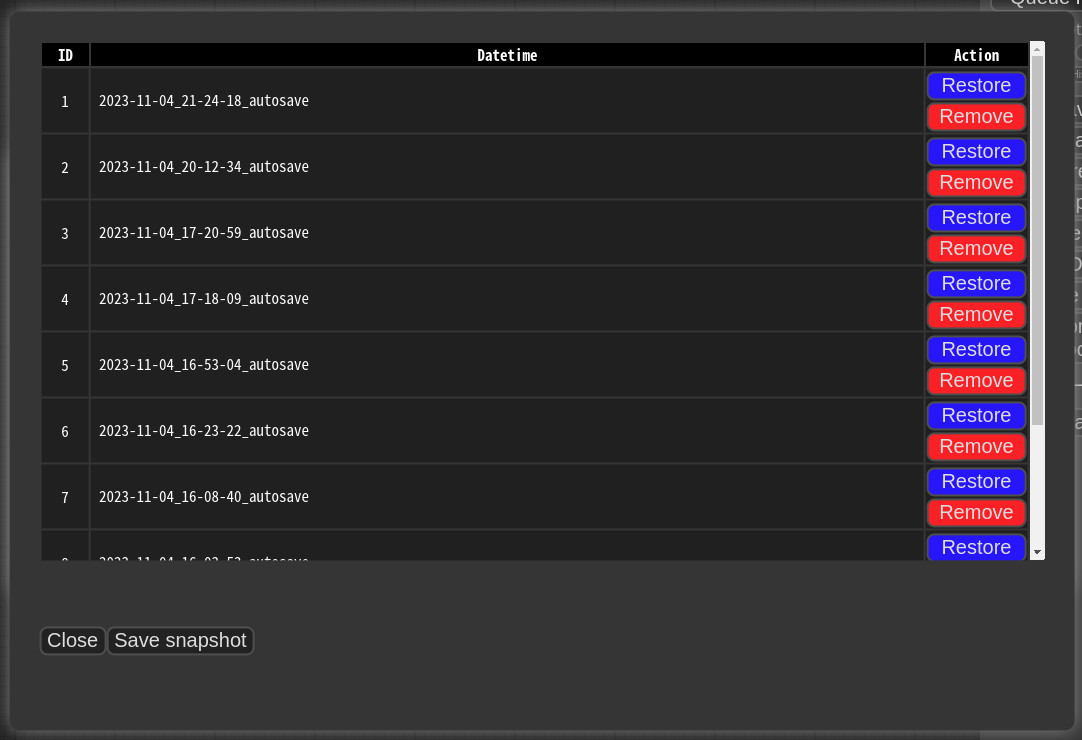

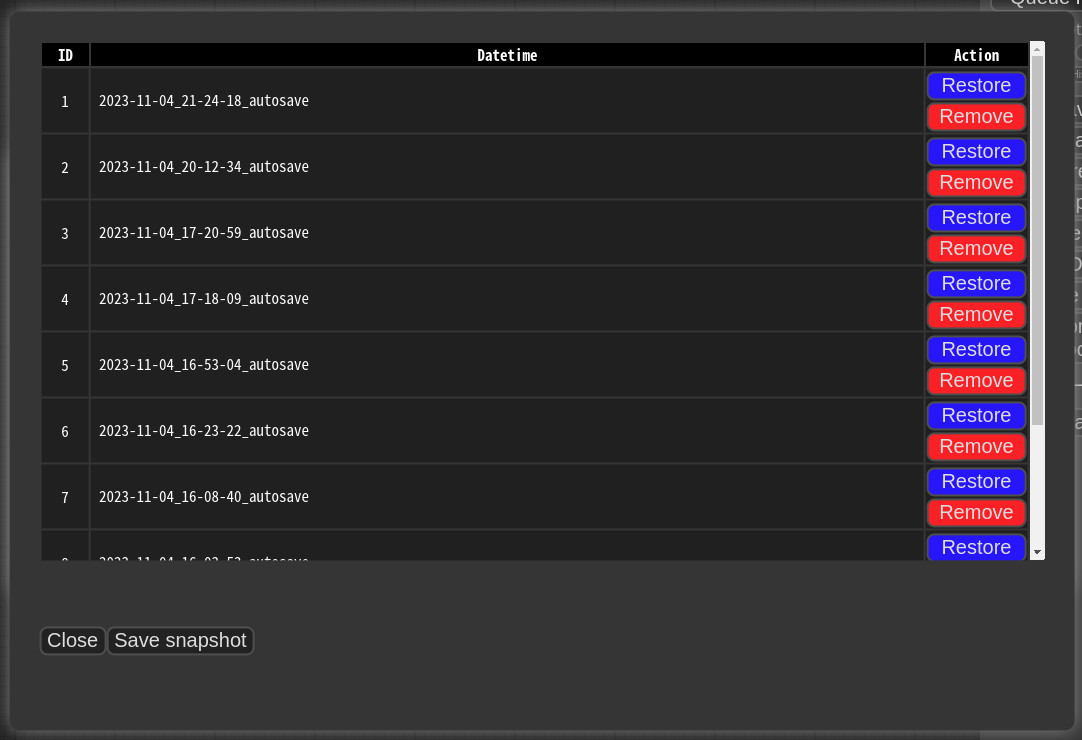

## Snapshot-Manager

|

## Snapshot-Manager

|

||||||

* When you press `Save snapshot` or use `Update All` on `Manager Menu`, the current installation status snapshot is saved.

|

* When you press `Save snapshot` or use `Update All` on `Manager Menu`, the current installation status snapshot is saved.

|

||||||

* Snapshot file dir: `<USER_DIRECTORY>/__manager/snapshots`

|

* Snapshot file dir: `<USER_DIRECTORY>/default/ComfyUI-Manager/snapshots`

|

||||||

* You can rename snapshot file.

|

* You can rename snapshot file.

|

||||||

* Press the "Restore" button to revert to the installation status of the respective snapshot.

|

* Press the "Restore" button to revert to the installation status of the respective snapshot.

|

||||||

* However, for custom nodes not managed by Git, snapshot support is incomplete.

|

* However, for custom nodes not managed by Git, snapshot support is incomplete.

|

||||||

* When you press `Restore`, it will take effect on the next ComfyUI startup.

|

* When you press `Restore`, it will take effect on the next ComfyUI startup.

|

||||||

* The selected snapshot file is saved in `<USER_DIRECTORY>/__manager/startup-scripts/restore-snapshot.json`, and upon restarting ComfyUI, the snapshot is applied and then deleted.

|

* The selected snapshot file is saved in `<USER_DIRECTORY>/default/ComfyUI-Manager/startup-scripts/restore-snapshot.json`, and upon restarting ComfyUI, the snapshot is applied and then deleted.

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

## cm-cli: command line tools for power users

|

## cm-cli: command line tools for power user

|

||||||

* A tool is provided that allows you to use the features of ComfyUI-Manager without running ComfyUI.

|

* A tool is provided that allows you to use the features of ComfyUI-Manager without running ComfyUI.

|

||||||

* For more details, please refer to the [cm-cli documentation](docs/en/cm-cli.md).

|

* For more details, please refer to the [cm-cli documentation](docs/en/cm-cli.md).

|

||||||

|

|

||||||
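The Snapshot-Manager notes describe a deferred, one-shot restore. Pressing `Restore` writes the chosen snapshot to `startup-scripts/restore-snapshot.json`, and the next startup applies that file and then deletes it. The sketch below shows the pattern. It assumes the file holds JSON and that a caller-supplied `apply_snapshot` callback (hypothetical) performs the actual restore. It is not ComfyUI-Manager's startup code.

```python
import json
import os
from typing import Any, Callable, Dict

RESTORE_FILE = "restore-snapshot.json"  # file name taken from the Snapshot-Manager notes


def run_pending_restore(startup_dir: str, apply_snapshot: Callable[[Dict[str, Any]], None]) -> bool:
    """Apply a pending snapshot once, then delete it. Return True if one was applied."""
    pending = os.path.join(startup_dir, RESTORE_FILE)
    if not os.path.exists(pending):
        return False
    with open(pending, encoding="utf-8") as f:
        snapshot = json.load(f)
    apply_snapshot(snapshot)  # hypothetical callback that performs the restore
    os.remove(pending)        # one-shot: later startups will not re-apply it
    return True
```

Deleting only after `apply_snapshot` returns means a restore that raises will be retried on the next launch. Deleting first would avoid retry loops on a broken file, at the cost of silently dropping the request.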

````diff
@@ -169,12 +220,12 @@ The following settings are applied based on the section marked as `is_default`.
 }
 ```
 * `<current timestamp>` Ensure that the timestamp is always unique.
-* "components" should have the same structure as the content of the file stored in `<USER_DIRECTORY>/__manager/components`.
+* "components" should have the same structure as the content of the file stored in `<USER_DIRECTORY>/default/ComfyUI-Manager/components`.
 * `<component name>`: The name should be in the format `<prefix>::<node name>`.
-* `<component node data>`: In the node data of the group node.
+* `<compnent nodeata>`: In the nodedata of the group node.
 * `<version>`: Only two formats are allowed: `major.minor.patch` or `major.minor`. (e.g. `1.0`, `2.2.1`)
 * `<datetime>`: Saved time
-* `<packname>`: If the packname is not empty, the category becomes packname/workflow, and it is saved in the <packname>.pack file in `<USER_DIRECTORY>/__manager/components`.
+* `<packname>`: If the packname is not empty, the category becomes packname/workflow, and it is saved in the <packname>.pack file in `<USER_DIRECTORY>/default/ComfyUI-Manager/components`.
 * `<category>`: If there is neither a category nor a packname, it is saved in the components category.
 ```
 "version":"1.0",
````
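The component-format notes constrain two fields:

* `<component name>` must have the form `<prefix>::<node name>`.
* `<version>` must be `major.minor.patch` or `major.minor` (e.g. `1.0`, `2.2.1`).

The validator sketch below assumes two things the notes do not state: each version part is a run of decimal digits, and both the prefix and the node name must be non-empty. The helper names and the sample component name are hypothetical.

```python
import re

# major.minor with an optional .patch, digits only (an assumption).
VERSION_RE = re.compile(r"\d+\.\d+(?:\.\d+)?")


def is_valid_version(version: str) -> bool:
    """Accept 'major.minor' or 'major.minor.patch'."""
    return VERSION_RE.fullmatch(version) is not None


def split_component_name(name: str) -> tuple:
    """Split '<prefix>::<node name>' at the first '::'. Raise ValueError if malformed."""
    prefix, sep, node_name = name.partition("::")
    if not sep or not prefix or not node_name:
        raise ValueError(f"expected '<prefix>::<node name>', got {name!r}")
    return prefix, node_name


assert is_valid_version("1.0") and is_valid_version("2.2.1")
assert not is_valid_version("1") and not is_valid_version("1.2.3.4")
print(split_component_name("mypack::Upscale Group"))  # ('mypack', 'Upscale Group')
```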

````diff
@@ -189,7 +240,7 @@ The following settings are applied based on the section marked as `is_default`.
 * Dragging and dropping or pasting a single component will add a node. However, when adding multiple components, nodes will not be added.
 
 
-## Support for installing missing nodes
+## Support of missing nodes installation
 
 
 
````

````diff
@@ -215,24 +266,23 @@ The following settings are applied based on the section marked as `is_default`.
 downgrade_blacklist = <Set a list of packages to prevent downgrades. List them separated by commas.>
 security_level = <Set the security level => strong|normal|normal-|weak>
 always_lazy_install = <Whether to perform dependency installation on restart even in environments other than Windows.>
-network_mode = <Set the network mode => public|private|offline|personal_cloud>
+network_mode = <Set the network mode => public|private|offline>
 ```
 
 * network_mode:
 - public: An environment that uses a typical public network.
 - private: An environment that uses a closed network, where a private node DB is configured via `channel_url`. (Uses cache if available)
 - offline: An environment that does not use any external connections when using an offline network. (Uses cache if available)
-- personal_cloud: Applies relaxed security features in cloud environments such as Google Colab or Runpod, where strong security is not required.
 
 
 ## Additional Feature
 * Logging to file feature
 * This feature is enabled by default and can be disabled by setting `file_logging = False` in the `config.ini`.
 
-* Fix node (recreate): When right-clicking on a node and selecting `Fix node (recreate)`, you can recreate the node. The widget's values are reset, while the connections maintain those with the same names.
+* Fix node(recreate): When right-clicking on a node and selecting `Fix node (recreate)`, you can recreate the node. The widget's values are reset, while the connections maintain those with the same names.
 * It is used to correct errors in nodes of old workflows created before, which are incompatible with the version changes of custom nodes.
 
-* Double-Click Node Title: You can set the double-click behavior of nodes in the ComfyUI-Manager menu.
+* Double-Click Node Title: You can set the double click behavior of nodes in the ComfyUI-Manager menu.
 * `Copy All Connections`, `Copy Input Connections`: Double-clicking a node copies the connections of the nearest node.
 * This action targets the nearest node within a straight-line distance of 1000 pixels from the center of the node.
 * In the case of `Copy All Connections`, it duplicates existing outputs, but since it does not allow duplicate connections, the existing output connections of the original node are disconnected.
````
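The `config.ini` settings in this hunk are plain `key = value` lines. The sketch below reads `network_mode` and `security_level` with the standard `configparser` module and checks them against the values listed in the hunk. Three things in it are assumptions for illustration, not documented behavior:

* the `[default]` section name;
* the fallback values `public` and `normal`;
* the helper name `read_policy`.

```python
import configparser

NETWORK_MODES = {"public", "private", "offline", "personal_cloud"}  # the removed side lists all four
SECURITY_LEVELS = {"strong", "normal", "normal-", "weak"}


def read_policy(ini_text: str, section: str = "default") -> tuple:
    """Return (network_mode, security_level), rejecting values outside the documented sets."""
    parser = configparser.ConfigParser()
    parser.read_string(ini_text)
    # `fallback` covers both a missing key and a missing section.
    network_mode = parser.get(section, "network_mode", fallback="public").strip().lower()
    security_level = parser.get(section, "security_level", fallback="normal").strip().lower()
    if network_mode not in NETWORK_MODES:
        raise ValueError(f"unsupported network_mode: {network_mode!r}")
    if security_level not in SECURITY_LEVELS:
        raise ValueError(f"unsupported security_level: {security_level!r}")
    return network_mode, security_level


print(read_policy("[default]\nnetwork_mode = personal_cloud\nsecurity_level = weak\n"))
# ('personal_cloud', 'weak')
```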

@@ -298,48 +348,46 @@ When you run the `scan.sh` script:

|

|||||||

|

|

||||||

* It updates the `github-stats.json`.

|

* It updates the `github-stats.json`.

|

||||||

* This uses the GitHub API, so set your token with `export GITHUB_TOKEN=your_token_here` to avoid quickly reaching the rate limit and malfunctioning.

|

* This uses the GitHub API, so set your token with `export GITHUB_TOKEN=your_token_here` to avoid quickly reaching the rate limit and malfunctioning.

|

||||||

* To skip this step, add the `--skip-stat-update` option.

|

* To skip this step, add the `--skip-update-stat` option.

|

||||||

|

|

||||||

* The `--skip-all` option applies both `--skip-update` and `--skip-stat-update`.

|

* The `--skip-all` option applies both `--skip-update` and `--skip-stat-update`.

|

||||||

|

|

||||||

|

|

||||||

## Troubleshooting

|

## Troubleshooting

|

||||||

* If your `git.exe` is installed in a specific location other than system git, please install ComfyUI-Manager and run ComfyUI. Then, specify the path including the file name in `git_exe = ` in the `<USER_DIRECTORY>/__manager/config.ini` file that is generated.

|

* If your `git.exe` is installed in a specific location other than system git, please install ComfyUI-Manager and run ComfyUI. Then, specify the path including the file name in `git_exe = ` in the `<USER_DIRECTORY>/default/ComfyUI-Manager/config.ini` file that is generated.

|

||||||

* If updating ComfyUI-Manager itself fails, please go to the **ComfyUI-Manager** directory and execute the command `git update-ref refs/remotes/origin/main a361cc1 && git fetch --all && git pull`.

|

* If updating ComfyUI-Manager itself fails, please go to the **ComfyUI-Manager** directory and execute the command `git update-ref refs/remotes/origin/main a361cc1 && git fetch --all && git pull`.

|

||||||

* If you encounter the error message `Overlapped Object has pending operation at deallocation on ComfyUI Manager load` under Windows

|

* If you encounter the error message `Overlapped Object has pending operation at deallocation on Comfyui Manager load` under Windows

|

||||||

* Edit `config.ini` file: add `windows_selector_event_loop_policy = True`

|

* Edit `config.ini` file: add `windows_selector_event_loop_policy = True`

|

||||||

* If the `SSL: CERTIFICATE_VERIFY_FAILED` error occurs.

|

* if `SSL: CERTIFICATE_VERIFY_FAILED` error is occured.

|

||||||

* Edit `config.ini` file: add `bypass_ssl = True`

|

* Edit `config.ini` file: add `bypass_ssl = True`

|

||||||

|

|

||||||

|

|

||||||

## Security policy

|

## Security policy

|

||||||

|

* Edit `config.ini` file: add `security_level = <LEVEL>`

|

||||||

|

* `strong`

|

||||||

|

* doesn't allow `high` and `middle` level risky feature

|

||||||

|

* `normal`

|

||||||

|

* doesn't allow `high` level risky feature

|

||||||

|

* `middle` level risky feature is available

|

||||||

|

* `normal-`

|

||||||

|

* doesn't allow `high` level risky feature if `--listen` is specified and not starts with `127.`

|

||||||

|

* `middle` level risky feature is available

|

||||||

|

* `weak`

|

||||||

|

* all feature is available

|

||||||

|

|

||||||

The security settings are applied based on whether the ComfyUI server's listener is non-local and whether the network mode is set to `personal_cloud`.

|

+* `high` level risky features
+  * `Install via git url`, `pip install`
+  * Installation of custom nodes registered not in the `default channel`.
+  * Fix custom nodes
 
-* **non-local**: When the server is launched with `--listen` and is bound to a network range other than the local `127.` range, allowing remote IP access.
-* **personal\_cloud**: When the `network_mode` is set to `personal_cloud`.
-### Risky Level Table
+* `middle` level risky features
+  * Uninstall/Update
+  * Installation of custom nodes registered in the `default channel`.
+  * Restore/Remove Snapshot
+  * Restart
 
-| Risky Level | features |
-|-------------|---------------------------------------------------------------------------------------------------------------------------------------|
-| high+ | * `Install via git url`, `pip install`<BR>* Installation of nodepack registered not in the `default channel`. |
-| high | * Fix nodepack |
-| middle+ | * Uninstall/Update<BR>* Installation of nodepack registered in the `default channel`.<BR>* Restore/Remove Snapshot<BR>* Install model |
-| middle | * Restart |
-| low | * Update ComfyUI |
 
 
-### Security Level Table
 
-| Security Level | local | non-local (personal_cloud) | non-local (not personal_cloud) |
-|----------------|--------------------------------------------------------------------------------------------------------------------------|--------------------------------------------------------------------------------------------------------------------------|--------------------------------|
-| strong | * Only `weak` level risky features are allowed | * Only `weak` level risky features are allowed | * Only `weak` level risky features are allowed |
-| normal | * `high+` and `high` level risky features are not allowed<BR>* `middle+` and `middle` level risky features are available | * `high+` and `high` level risky features are not allowed<BR>* `middle+` and `middle` level risky features are available | * `high+`, `high` and `middle+` level risky features are not allowed<BR>* `middle` level risky features are available
-| normal- | * All features are available | * `high+` and `high` level risky features are not allowed<BR>* `middle+` and `middle` level risky features are available | * `high+`, `high` and `middle+` level risky features are not allowed<BR>* `middle` level risky features are available
-| weak | * All features are available | * All features are available | * `high+` and `middle+` level risky features are not allowed<BR>* `high`, `middle` and `low` level risky features are available
 
+* `low` level risky features
+  * Update ComfyUI
 
 
 # Disclaimer
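The Security Level Table removed in this hunk works as a lookup: a security level and a network location together determine which risk levels may run. The minimal Python sketch below encodes that table. The location keys `local`, `personal_cloud` and `remote` and the function `is_feature_allowed` are hypothetical names, not ComfyUI-Manager API. Two readings are assumptions. First, the `strong` row says only "`weak` level" features are allowed, but `weak` is not a risk level in the Risky Level Table, so the sketch treats it as `low`. Second, rows that do not mention `low` are assumed to permit it.

```python
from typing import Dict, FrozenSet

# Risk levels from the Risky Level Table, most to least dangerous.
RISK_LEVELS = ("high+", "high", "middle+", "middle", "low")

ALL: FrozenSet[str] = frozenset(RISK_LEVELS)
NO_HIGH: FrozenSet[str] = frozenset({"middle+", "middle", "low"})
MIDDLE_ONLY: FrozenSet[str] = frozenset({"middle", "low"})
# Assumption: "only `weak` level risky features" in the `strong` row means `low`.
LOW_ONLY: FrozenSet[str] = frozenset({"low"})

# Hypothetical location keys: "remote" = non-local and not personal_cloud.
ALLOWED: Dict[str, Dict[str, FrozenSet[str]]] = {
    "strong":  {"local": LOW_ONLY, "personal_cloud": LOW_ONLY, "remote": LOW_ONLY},
    "normal":  {"local": NO_HIGH,  "personal_cloud": NO_HIGH,  "remote": MIDDLE_ONLY},
    "normal-": {"local": ALL,      "personal_cloud": NO_HIGH,  "remote": MIDDLE_ONLY},
    "weak":    {"local": ALL,      "personal_cloud": ALL,
                "remote": frozenset({"high", "middle", "low"})},
}


def is_feature_allowed(security_level: str, location: str, risk_level: str) -> bool:
    """Return True if a feature of `risk_level` may run under the given policy."""
    if risk_level not in RISK_LEVELS:
        raise ValueError(f"unknown risk level: {risk_level}")
    return risk_level in ALLOWED[security_level][location]
```

Under this reading, `is_feature_allowed("normal", "remote", "middle+")` is False. Installing a nodepack registered in the `default channel` would therefore be refused on a public listener at the `normal` level, but allowed on a local one.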

25
__init__.py
Normal file
@@ -0,0 +1,25 @@
+"""
+This file is the entry point for the ComfyUI-Manager package, handling CLI-only mode and initial setup.
+"""
+
+import os
+import sys
+
+cli_mode_flag = os.path.join(os.path.dirname(__file__), '.enable-cli-only-mode')
+
+if not os.path.exists(cli_mode_flag):
+    sys.path.append(os.path.join(os.path.dirname(__file__), "glob"))
+    import manager_server # noqa: F401
+    import share_3rdparty # noqa: F401
+    import cm_global
+
+    if not cm_global.disable_front and not 'DISABLE_COMFYUI_MANAGER_FRONT' in os.environ:
+        WEB_DIRECTORY = "js"
+else:
+    print("\n[ComfyUI-Manager] !! cli-only-mode is enabled !!\n")
+
+NODE_CLASS_MAPPINGS = {}
+__all__ = ['NODE_CLASS_MAPPINGS']
+
+
+
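The added `__init__.py` decides at import time whether to load the manager server and web UI. The minimal sketch below expresses that decision as a pure function, which makes the three possible outcomes easy to test. `startup_mode` and its return strings are hypothetical, and the `disable_front` argument stands in for `cm_global.disable_front`.

```python
import os
from typing import Mapping

CLI_ONLY_FLAG = ".enable-cli-only-mode"


def startup_mode(package_dir: str, disable_front: bool, environ: Mapping[str, str]) -> str:
    """Mirror the branch structure of __init__.py without importing anything."""
    if os.path.exists(os.path.join(package_dir, CLI_ONLY_FLAG)):
        # Flag file present: manager_server and share_3rdparty are never imported.
        return "cli-only"
    if not disable_front and "DISABLE_COMFYUI_MANAGER_FRONT" not in environ:
        # Server modules loaded and WEB_DIRECTORY = "js" exposed to ComfyUI.
        return "server+web"
    # Server modules loaded, but no frontend directory registered.
    return "server"
```

According to this logic, creating an empty `.enable-cli-only-mode` file next to `__init__.py` switches the package to CLI-only mode the next time ComfyUI starts.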

6
channels.list.template
Normal file
@@ -0,0 +1,6 @@
+default::https://raw.githubusercontent.com/ltdrdata/ComfyUI-Manager/main
+recent::https://raw.githubusercontent.com/ltdrdata/ComfyUI-Manager/main/node_db/new
+legacy::https://raw.githubusercontent.com/ltdrdata/ComfyUI-Manager/main/node_db/legacy
+forked::https://raw.githubusercontent.com/ltdrdata/ComfyUI-Manager/main/node_db/forked
+dev::https://raw.githubusercontent.com/ltdrdata/ComfyUI-Manager/main/node_db/dev
+tutorial::https://raw.githubusercontent.com/ltdrdata/ComfyUI-Manager/main/node_db/tutorial
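Each line of `channels.list.template` maps a channel name to a base URL in the form `name::url`. The minimal parsing sketch below illustrates that format. It assumes blank lines and `#` comments should be ignored, even though the template contains neither. `parse_channels` is a hypothetical helper, not ComfyUI-Manager's own loader.

```python
from typing import Dict


def parse_channels(text: str) -> Dict[str, str]:
    """Parse `name::url` lines into an insertion-ordered {name: url} mapping."""
    channels: Dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        # Split on the first "::" only; "https://" contains a single colon.
        name, sep, url = line.partition("::")
        if sep:  # lines without a "::" separator are skipped
            channels[name.strip()] = url.strip()
    return channels


template = """\
default::https://raw.githubusercontent.com/ltdrdata/ComfyUI-Manager/main
recent::https://raw.githubusercontent.com/ltdrdata/ComfyUI-Manager/main/node_db/new
"""
print(parse_channels(template)["recent"])
```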

4
check.sh
@@ -37,7 +37,7 @@ find ~/.tmp/default -name "*.py" -print0 | xargs -0 grep -E "crypto|^_A="
 
 echo
 echo CHECK3
-find ~/.tmp/default -name "requirements.txt" | xargs grep "^\s*[^#]*https\?:"
-find ~/.tmp/default -name "requirements.txt" | xargs grep "^\s*[^#].*\.whl"
+find ~/.tmp/default -name "requirements.txt" | xargs grep "^\s*https\\?:"
+find ~/.tmp/default -name "requirements.txt" | xargs grep "\.whl"
 
 echo
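This hunk changes what the two `requirements.txt` checks report. The sketch below translates the four grep patterns into Python `re` syntax and runs them against a few sample lines. The translation assumes GNU grep semantics, where `\s` matches whitespace and `\?` makes the preceding character optional; inside the double-quoted shell string, `\\?` reaches grep as `\?`. The sample lines are illustrative only.

```python
import re
from typing import Iterable, List

# Python equivalents of the grep patterns in check.sh.
OLD_URL = re.compile(r"^\s*[^#]*https?:")   # http(s): appearing before any '#'
NEW_URL = re.compile(r"^\s*https?:")        # line must start with http(s):
OLD_WHL = re.compile(r"^\s*[^#].*\.whl")    # .whl unless '#' is in column 0
NEW_WHL = re.compile(r"\.whl")              # .whl anywhere, comments included

SAMPLES = [
    "git+https://github.com/example/pkg",
    "https://example.com/pkg-1.0-py3-none-any.whl",
    "# https://example.com/old.whl",
    "numpy",
]


def matches(pattern: re.Pattern, lines: Iterable[str]) -> List[str]:
    return [line for line in lines if pattern.search(line)]


for name, pat in [("old url", OLD_URL), ("new url", NEW_URL),
                  ("old whl", OLD_WHL), ("new whl", NEW_WHL)]:
    print(f"{name}: {matches(pat, SAMPLES)}")
```

In this translation, the new URL check no longer flags `git+https://` requirement lines. The new wheel check also reports wheels that are mentioned only in comments.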

@@ -15,38 +15,41 @@ import git
 import importlib
 
 
-from ..common import manager_util
+sys.path.append(os.path.dirname(__file__))
+sys.path.append(os.path.join(os.path.dirname(__file__), "glob"))
 
+import manager_util
 
 # read env vars
 # COMFYUI_FOLDERS_BASE_PATH is not required in cm-cli.py
 # `comfy_path` should be resolved before importing manager_core
 
 comfy_path = os.environ.get('COMFYUI_PATH')
 
 if comfy_path is None:
-    print("[bold red]cm-cli: environment variable 'COMFYUI_PATH' is not specified.[/bold red]")
-    exit(-1)
+    try:
+        import folder_paths
+        comfy_path = os.path.join(os.path.dirname(folder_paths.__file__))
+    except:
+        print("\n[bold yellow]WARN: The `COMFYUI_PATH` environment variable is not set. Assuming `custom_nodes/ComfyUI-Manager/../../` as the ComfyUI path.[/bold yellow]", file=sys.stderr)
+        comfy_path = os.path.abspath(os.path.join(manager_util.comfyui_manager_path, '..', '..'))
 
+# This should be placed here
 sys.path.append(comfy_path)
 
-if not os.path.exists(os.path.join(comfy_path, 'folder_paths.py')):
-    print("[bold red]cm-cli: '{comfy_path}' is not a valid 'COMFYUI_PATH' location.[/bold red]")
-    exit(-1)
 
 
 import utils.extra_config
-from ..common import cm_global
-from ..legacy import manager_core as core
-from ..common import context
-from ..legacy.manager_core import unified_manager
-from ..common import cnr_utils
+import cm_global
+import manager_core as core
+from manager_core import unified_manager
+import cnr_utils
 
 comfyui_manager_path = os.path.abspath(os.path.dirname(__file__))
 
 cm_global.pip_blacklist = {'torch', 'torchaudio', 'torchsde', 'torchvision'}
 cm_global.pip_downgrade_blacklist = ['torch', 'torchaudio', 'torchsde', 'torchvision', 'transformers', 'safetensors', 'kornia']
 
-cm_global.pip_overrides = {}
+if sys.version_info < (3, 13):
+    cm_global.pip_overrides = {'numpy': 'numpy<2'}
+else:
+    cm_global.pip_overrides = {}
 
 if os.path.exists(os.path.join(manager_util.comfyui_manager_path, "pip_overrides.json")):
     with open(os.path.join(manager_util.comfyui_manager_path, "pip_overrides.json"), 'r', encoding="UTF-8", errors="ignore") as json_file:
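On the `+` side of this hunk, `comfy_path` is resolved in three steps; the `-` side simply exits when `COMFYUI_PATH` is unset. The minimal sketch below follows the same order as a testable function. `resolve_comfy_path` is hypothetical. It uses `importlib.util.find_spec` to locate `folder_paths` without executing it, whereas the hunk performs a real import inside a bare `try`/`except`.

```python
import importlib.util
import os
from typing import Mapping


def resolve_comfy_path(environ: Mapping[str, str], manager_path: str) -> str:
    # 1. An explicit COMFYUI_PATH always wins.
    path = environ.get("COMFYUI_PATH")
    if path is not None:
        return path
    # 2. Otherwise use the directory of an importable `folder_paths` module.
    spec = importlib.util.find_spec("folder_paths")
    if spec is not None and spec.origin:
        return os.path.dirname(spec.origin)
    # 3. Fall back to custom_nodes/ComfyUI-Manager/../../
    return os.path.abspath(os.path.join(manager_path, "..", ".."))
```

The removed lines also validated the resolved path by checking for `folder_paths.py` and exiting otherwise. The `+` side has no equivalent check.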

@@ -66,7 +69,7 @@ def check_comfyui_hash():
         repo = git.Repo(comfy_path)
         core.comfy_ui_revision = len(list(repo.iter_commits('HEAD')))
         core.comfy_ui_commit_datetime = repo.head.commit.committed_datetime
-    except Exception:
+    except:
         print('[bold yellow]INFO: Frozen ComfyUI mode.[/bold yellow]')
         core.comfy_ui_revision = 0
         core.comfy_ui_commit_datetime = 0

@@ -82,7 +85,7 @@ def read_downgrade_blacklist():
     try:
         import configparser
         config = configparser.ConfigParser(strict=False)
-        config.read(context.manager_config_path)
+        config.read(core.manager_config.path)
         default_conf = config['default']
 
         if 'downgrade_blacklist' in default_conf:

@@ -90,7 +93,7 @@ def read_downgrade_blacklist():
             items = [x.strip() for x in items if x != '']
             cm_global.pip_downgrade_blacklist += items
             cm_global.pip_downgrade_blacklist = list(set(cm_global.pip_downgrade_blacklist))
-    except Exception:
+    except:
         pass
 
 
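`read_downgrade_blacklist()` extends the built-in `pip_downgrade_blacklist` with entries from the `downgrade_blacklist` key in the `[default]` section of the manager config. The line that splits the raw value falls outside these hunks, so the sketch below assumes it is split on commas. `merge_downgrade_blacklist` is a hypothetical helper and differs from the original in two deliberate ways. It strips entries before filtering out empty ones, so a value such as `a, ,b` does not produce an empty package name. It also returns a sorted list instead of `list(set(...))`, so the result is deterministic.

```python
import configparser
from typing import Iterable, List


def merge_downgrade_blacklist(config_text: str, builtin: Iterable[str]) -> List[str]:
    config = configparser.ConfigParser(strict=False)
    config.read_string(config_text)
    merged = set(builtin)
    # has_option() is False when the [default] section itself is missing.
    if config.has_option("default", "downgrade_blacklist"):
        raw = config.get("default", "downgrade_blacklist")
        # Strip first, then drop empties, so trailing commas and blanks are ignored.
        merged.update(x.strip() for x in raw.split(",") if x.strip())
    return sorted(merged)
```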

@@ -105,7 +108,7 @@ class Ctx:
         self.no_deps = False
         self.mode = 'cache'
         self.user_directory = None
-        self.custom_nodes_paths = [os.path.join(context.comfy_base_path, 'custom_nodes')]
+        self.custom_nodes_paths = [os.path.join(core.comfy_base_path, 'custom_nodes')]
         self.manager_files_directory = os.path.dirname(__file__)
 
         if Ctx.folder_paths is None:

@@ -143,14 +146,17 @@ class Ctx:
         if os.path.exists(extra_model_paths_yaml):
             utils.extra_config.load_extra_path_config(extra_model_paths_yaml)
 
-        context.update_user_directory(user_directory)
+        core.update_user_directory(user_directory)
 
-        if os.path.exists(context.manager_pip_overrides_path):
-            with open(context.manager_pip_overrides_path, 'r', encoding="UTF-8", errors="ignore") as json_file:
+        if os.path.exists(core.manager_pip_overrides_path):
+            with open(core.manager_pip_overrides_path, 'r', encoding="UTF-8", errors="ignore") as json_file:
                 cm_global.pip_overrides = json.load(json_file)
 
-        if os.path.exists(context.manager_pip_blacklist_path):
-            with open(context.manager_pip_blacklist_path, 'r', encoding="UTF-8", errors="ignore") as f:
+        if sys.version_info < (3, 13):
+            cm_global.pip_overrides = {'numpy': 'numpy<2'}
+
+        if os.path.exists(core.manager_pip_blacklist_path):
+            with open(core.manager_pip_blacklist_path, 'r', encoding="UTF-8", errors="ignore") as f:
                 for x in f.readlines():
                     y = x.strip()
                     if y != '':
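On the `+` side of this hunk, the Python < 3.13 branch assigns a new dict to `cm_global.pip_overrides`. That assignment discards any overrides just loaded from the pip overrides JSON file on those interpreters. If the intent is to add the `numpy<2` pin while keeping user overrides, merging is one option. The sketch below is hypothetical (`load_pip_overrides` is not a ComfyUI-Manager function). Letting a user-supplied `numpy` entry take precedence is a design choice of the sketch, not documented behavior.

```python
import json
import sys
from typing import Dict, Tuple


def load_pip_overrides(json_text: str, version_info: Tuple = tuple(sys.version_info)) -> Dict[str, str]:
    overrides: Dict[str, str] = json.loads(json_text) if json_text else {}
    if version_info < (3, 13):
        # Add the pin only if the user has not configured numpy themselves.
        overrides.setdefault("numpy", "numpy<2")
    return overrides
```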

@@ -163,15 +169,15 @@ class Ctx:
 
     @staticmethod
     def get_startup_scripts_path():
-        return os.path.join(context.manager_startup_script_path, "install-scripts.txt")
+        return os.path.join(core.manager_startup_script_path, "install-scripts.txt")
 
     @staticmethod
     def get_restore_snapshot_path():
-        return os.path.join(context.manager_startup_script_path, "restore-snapshot.json")
+        return os.path.join(core.manager_startup_script_path, "restore-snapshot.json")
 
     @staticmethod
     def get_snapshot_path():
-        return context.manager_snapshot_path
+        return core.manager_snapshot_path
 
     @staticmethod
     def get_custom_nodes_paths():

@@ -438,11 +444,8 @@ def show_list(kind, simple=False):
     flag = kind in ['all', 'cnr', 'installed', 'enabled']
     for k, v in unified_manager.active_nodes.items():
         if flag:
-            cnr = unified_manager.cnr_map.get(k)
-            if cnr:
-                processed[k] = "[ ENABLED ] ", cnr['name'], k, cnr['publisher']['name'], v[0]
-            else:
-                processed[k] = None
+            cnr = unified_manager.cnr_map[k]
+            processed[k] = "[ ENABLED ] ", cnr['name'], k, cnr['publisher']['name'], v[0]
         else:
             processed[k] = None
 

@@ -462,11 +465,8 @@ def show_list(kind, simple=False):
             continue
 
         if flag:
-            cnr = unified_manager.cnr_map.get(k) # NOTE: can this be None if removed from CNR after installed
-            if cnr:
-                processed[k] = "[ DISABLED ] ", cnr['name'], k, cnr['publisher']['name'], ", ".join(list(v.keys()))
-            else:
-                processed[k] = None
+            cnr = unified_manager.cnr_map[k]
+            processed[k] = "[ DISABLED ] ", cnr['name'], k, cnr['publisher']['name'], ", ".join(list(v.keys()))
         else:
             processed[k] = None
 

@@ -475,11 +475,8 @@ def show_list(kind, simple=False):
             continue
 
         if flag:
-            cnr = unified_manager.cnr_map.get(k)
-            if cnr:
-                processed[k] = "[ DISABLED ] ", cnr['name'], k, cnr['publisher']['name'], 'nightly'
-            else:
-                processed[k] = None
+            cnr = unified_manager.cnr_map[k]
+            processed[k] = "[ DISABLED ] ", cnr['name'], k, cnr['publisher']['name'], 'nightly'
         else:
             processed[k] = None
 

@@ -499,12 +496,9 @@ def show_list(kind, simple=False):
             continue
 
         if flag:
-            cnr = unified_manager.cnr_map.get(k)
-            if cnr:
-                ver_spec = v['latest_version']['version'] if 'latest_version' in v else '0.0.0'
-                processed[k] = "[ NOT INSTALLED ] ", cnr['name'], k, cnr['publisher']['name'], ver_spec
-            else:
-                processed[k] = None
+            cnr = unified_manager.cnr_map[k]
+            ver_spec = v['latest_version']['version'] if 'latest_version' in v else '0.0.0'
+            processed[k] = "[ NOT INSTALLED ] ", cnr['name'], k, cnr['publisher']['name'], ver_spec
         else:
             processed[k] = None
 
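The `show_list` hunks differ mainly in how a nodepack id is looked up in `unified_manager.cnr_map`. Assuming `cnr_map` behaves like a `dict`, `cnr_map[k]` raises `KeyError` for an id that is installed but no longer in the registry. That is the situation the `NOTE` comment on the `-` side asks about. With `.get(k)`, the row is set to `None` instead. The helper below is a hypothetical sketch of the defensive variant and uses a plain dict in place of the real map.

```python
from typing import Any, Mapping, Optional, Tuple

Row = Tuple[str, str, str, str, Any]


def format_row(status: str, node_id: str,
               cnr_map: Mapping[str, Mapping[str, Any]], version: Any) -> Optional[Row]:
    """Build a show_list-style row, or None when the id is missing from the registry map."""
    cnr = cnr_map.get(node_id)
    if cnr is None:
        return None
    return status, cnr["name"], node_id, cnr["publisher"]["name"], version
```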

@@ -670,7 +664,7 @@ def install(
     cmd_ctx.set_channel_mode(channel, mode)
     cmd_ctx.set_no_deps(no_deps)
 
-    pip_fixer = manager_util.PIPFixer(manager_util.get_installed_packages(), comfy_path, context.manager_files_path)
+    pip_fixer = manager_util.PIPFixer(manager_util.get_installed_packages(), comfy_path, core.manager_files_path)
     for_each_nodes(nodes, act=install_node, exit_on_fail=exit_on_fail)
     pip_fixer.fix_broken()
 

@@ -708,7 +702,7 @@ def reinstall(
     cmd_ctx.set_channel_mode(channel, mode)
     cmd_ctx.set_no_deps(no_deps)
 
-    pip_fixer = manager_util.PIPFixer(manager_util.get_installed_packages(), comfy_path, context.manager_files_path)
+    pip_fixer = manager_util.PIPFixer(manager_util.get_installed_packages(), comfy_path, core.manager_files_path)
     for_each_nodes(nodes, act=reinstall_node)
     pip_fixer.fix_broken()
 

@@ -762,7 +756,7 @@ def update(
     if 'all' in nodes:
         asyncio.run(auto_save_snapshot())
 
-    pip_fixer = manager_util.PIPFixer(manager_util.get_installed_packages(), comfy_path, context.manager_files_path)
+    pip_fixer = manager_util.PIPFixer(manager_util.get_installed_packages(), comfy_path, core.manager_files_path)
 
     for x in nodes:
         if x.lower() in ['comfyui', 'comfy', 'all']:

@@ -863,7 +857,7 @@ def fix(
     if 'all' in nodes:
         asyncio.run(auto_save_snapshot())
 
-    pip_fixer = manager_util.PIPFixer(manager_util.get_installed_packages(), comfy_path, context.manager_files_path)
+    pip_fixer = manager_util.PIPFixer(manager_util.get_installed_packages(), comfy_path, core.manager_files_path)
     for_each_nodes(nodes, fix_node, allow_all=True)
     pip_fixer.fix_broken()
 

@@ -1140,7 +1134,7 @@ def restore_snapshot(
         print(f"[bold red]ERROR: `{snapshot_path}` is not exists.[/bold red]")
         exit(1)
 
-    pip_fixer = manager_util.PIPFixer(manager_util.get_installed_packages(), comfy_path, context.manager_files_path)
+    pip_fixer = manager_util.PIPFixer(manager_util.get_installed_packages(), comfy_path, core.manager_files_path)
     try:
         asyncio.run(core.restore_snapshot(snapshot_path, extras))
     except Exception:

@@ -1172,7 +1166,7 @@ def restore_dependencies(
     total = len(node_paths)
     i = 1
 
-    pip_fixer = manager_util.PIPFixer(manager_util.get_installed_packages(), comfy_path, context.manager_files_path)
+    pip_fixer = manager_util.PIPFixer(manager_util.get_installed_packages(), comfy_path, core.manager_files_path)
     for x in node_paths:
         print("----------------------------------------------------------------------------------------------------")
         print(f"Restoring [{i}/{total}]: {x}")

@@ -1191,7 +1185,7 @@ def post_install(
 ):
     path = os.path.expanduser(path)
 
-    pip_fixer = manager_util.PIPFixer(manager_util.get_installed_packages(), comfy_path, context.manager_files_path)
+    pip_fixer = manager_util.PIPFixer(manager_util.get_installed_packages(), comfy_path, core.manager_files_path)
     unified_manager.execute_install_script('', path, instant_execution=True)
     pip_fixer.fix_broken()
 

@@ -1231,11 +1225,11 @@ def install_deps(
         with open(deps, 'r', encoding="UTF-8", errors="ignore") as json_file:
             try:
                 json_obj = json.load(json_file)
-            except Exception:
+            except:
                 print(f"[bold red]Invalid json file: {deps}[/bold red]")
                 exit(1)
 
-        pip_fixer = manager_util.PIPFixer(manager_util.get_installed_packages(), comfy_path, context.manager_files_path)
+        pip_fixer = manager_util.PIPFixer(manager_util.get_installed_packages(), comfy_path, core.manager_files_path)
         for k in json_obj['custom_nodes'].keys():
             state = core.simple_check_custom_node(k)
             if state == 'installed':

@@ -1292,10 +1286,6 @@ def export_custom_node_ids(
                     print(f"{x['id']}@unknown", file=output_file)
 
 
-def main():
-    app()
-
-
 if __name__ == '__main__':
     sys.argv[0] = re.sub(r'(-script\.pyw|\.exe)?$', '', sys.argv[0])
     sys.exit(app())

@@ -1,49 +0,0 @@
-# ComfyUI-Manager: Core Backend (glob)
-
-This directory contains the Python backend modules that power ComfyUI-Manager, handling the core functionality of node management, downloading, security, and server operations.
-
-## Directory Structure
-- **glob/** - code for new cacheless ComfyUI-Manager
-- **legacy/** - code for legacy ComfyUI-Manager
-
-## Core Modules
-- **manager_core.py**: The central implementation of management functions, handling configuration, installation, updates, and node management.
-- **manager_server.py**: Implements server functionality and API endpoints for the web interface to interact with the backend.
-
-## Specialized Modules
-
-- **share_3rdparty.py**: Manages integration with third-party sharing platforms.
-
-## Architecture
-
-The backend follows a modular design pattern with clear separation of concerns:
-
-1. **Core Layer**: Manager modules provide the primary API and business logic
-2. **Utility Layer**: Helper modules provide specialized functionality
-3. **Integration Layer**: Modules that connect to external systems
-
-## Security Model
-
-The system implements a comprehensive security framework with multiple levels:
-
-- **Block**: Highest security - blocks most remote operations
-- **High**: Allows only specific trusted operations
-- **Middle**: Standard security for most users
-- **Normal-**: More permissive for advanced users
-- **Weak**: Lowest security for development environments
-
-## Implementation Details
-
-- The backend is designed to work seamlessly with ComfyUI
-- Asynchronous task queuing is implemented for background operations
-- The system supports multiple installation modes
-- Error handling and risk assessment are integrated throughout the codebase
-
-## API Integration
-
-The backend exposes a REST API via `manager_server.py` that enables:
-- Custom node management (install, update, disable, remove)
-- Model downloading and organization
-- System configuration
-- Snapshot management
-- Workflow component handling

@@ -1,104 +0,0 @@
-import os
-import logging
-from aiohttp import web
-from .common.manager_security import HANDLER_POLICY
-from .common import manager_security
-from comfy.cli_args import args
-
-
-def prestartup():
-    from . import prestartup_script # noqa: F401
-    logging.info('[PRE] ComfyUI-Manager')
-
-
-def start():
-    logging.info('[START] ComfyUI-Manager')
-    from .common import cm_global # noqa: F401
-
-    if args.enable_manager:
-        if args.enable_manager_legacy_ui:
-            try:
-                from .legacy import manager_server # noqa: F401
-                from .legacy import share_3rdparty # noqa: F401
-                from .legacy import manager_core as core
-                import nodes
-
-                logging.info("[ComfyUI-Manager] Legacy UI is enabled.")
-                nodes.EXTENSION_WEB_DIRS['comfyui-manager-legacy'] = os.path.join(os.path.dirname(__file__), 'js')
-            except Exception as e:
-                print("Error enabling legacy ComfyUI Manager frontend:", e)
-                core = None
-        else:
-            from .glob import manager_server # noqa: F401
-            from .glob import share_3rdparty # noqa: F401
-            from .glob import manager_core as core
-
-        if core is not None:
-            manager_security.is_personal_cloud_mode = core.get_config()['network_mode'].lower() == 'personal_cloud'
-
-
-def should_be_disabled(fullpath:str) -> bool:
-    """
-    1. Disables the legacy ComfyUI-Manager.
-    2. The blocklist can be expanded later based on policies.
-    """
-    if args.enable_manager:
-        # In cases where installation is done via a zip archive, the directory name may not be comfyui-manager, and it may not contain a git repository.
-        # It is assumed that any installed legacy ComfyUI-Manager will have at least 'comfyui-manager' in its directory name.
-        dir_name = os.path.basename(fullpath).lower()
-        if 'comfyui-manager' in dir_name:
-            return True
-
-    return False
-
-
-def get_client_ip(request):
-    peername = request.transport.get_extra_info("peername")
-    if peername is not None:
-        host, port = peername
-        return host
-
-    return "unknown"
-
-
-def create_middleware():
-    connected_clients = set()
-    is_local_mode = manager_security.is_loopback(args.listen)
-
-    @web.middleware
-    async def manager_middleware(request: web.Request, handler):
-        nonlocal connected_clients
-
-        # security policy for remote environments
-        prev_client_count = len(connected_clients)
-        client_ip = get_client_ip(request)
-        connected_clients.add(client_ip)
-        next_client_count = len(connected_clients)
-